Hello from The AI Night,

Today in AI:

Anthropic Expands Claude Cowork With Enterprise AI Agents

Meta Signs 6GW GPU Deal With AMD in Major Compute Shift

Cursor's Cloud Agents Can Now Build, Test and Ship Code Autonomously

Image Source: Anthropic blog

Here's the deal: Anthropic released a major Cowork update on Feb 24, letting enterprise admins build private plugin marketplaces and distribute custom AI agents across their organizations. Claude can now also orchestrate tasks end-to-end between Excel and PowerPoint.

The Breakdown:

Admins can create org-specific plugin marketplaces with per-user provisioning, auto-install, and private GitHub repos as plugin sources (private beta).

New unified "Customize" menu consolidates plugins, skills, and connectors (MCPs) in one place.

10+ new role-specific plugin templates added, including HR, Design, Engineering, Operations, Financial Analysis, Investment Banking, Equity Research, Private Equity and Wealth Management.

New connectors include Google Workspace, Docusign, FactSet, Harvey, S&P Global, LSEG and others.

OpenTelemetry support added for tracking usage, costs, and tool activity.

Cross-app Excel-to-PowerPoint workflow is in early research preview for all paid plans.

The bigger picture: This positions Cowork as an enterprise agent distribution layer, not just a chat tool. The plugin marketplace model lets companies standardize AI workflows by department, which could accelerate adoption among non-technical teams at scale.

Image Source: Meta blog

Here's the deal: Meta announced a multi-year agreement with AMD to deploy AMD Instinct GPUs across its AI infrastructure, scaling up to 6GW of compute capacity. The deal covers silicon, systems, and software alignment across both companies' roadmaps.

The Breakdown:

First GPU shipments are set to begin in the second half of 2026, built on the Helios rack-scale architecture co-developed with AMD.

The agreement spans Instinct GPUs, EPYC CPUs, and rack-scale AI systems, with vertical integration across Meta's infrastructure stack.

This falls under Meta's broader "Meta Compute" initiative, which combines hardware from multiple partners alongside its in-house MTIA silicon program.

Meta frames this as a diversification play, reducing reliance on a single GPU supplier while scaling for what it calls "personal superintelligence."

AMD CEO Lisa Su called it a "multi-generation collaboration," positioning AMD at the center of global AI infrastructure buildout.

The bigger picture: This is one of the largest publicly announced GPU procurement deals outside of Nvidia's ecosystem. It signals Meta is serious about supplier diversification, which could reshape bargaining dynamics across the AI chip market.

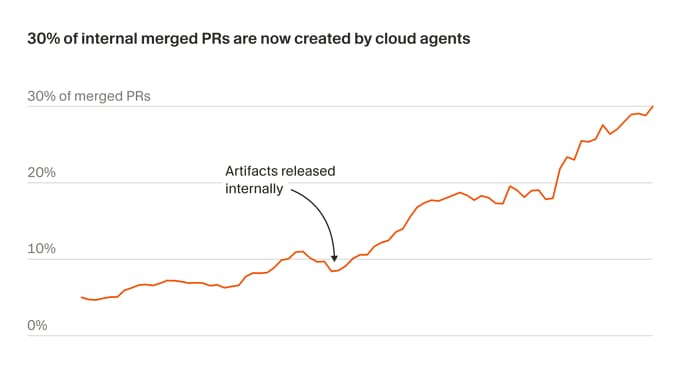

Image Source: Cursor blog

Here's the deal: Cursor launched a new version of cloud agents that run in isolated virtual machines with full development environments. Each agent can build software, interact with desktop applications, browse the web and produce artifacts like videos, screenshots and logs to verify its own work.

The Breakdown:

Cloud agents operate in sandboxed VMs, removing resource conflicts with local machines and enabling parallel execution of multiple agents.

Agents can generate merge-ready PRs, rebase onto main, resolve conflicts, and squash commits independently.

30% of merged PRs at Cursor are now created by these autonomous cloud agents.

Accessible from web, mobile, desktop, Slack and GitHub.

Internal use cases include building features, reproducing security vulnerabilities, UI testing and handling quick fixes.

Agents record their own screen sessions so developers can validate work without checking out branches locally.

Users can also take over the agent's remote desktop to interact directly with modified software.

The bigger picture: Cloud sandboxes solve a core infrastructure bottleneck: local agents can't self-test or run in parallel without competing for resources. By giving each agent an isolated VM with full desktop access, Cursor pushes coding agents from "generate a diff" toward autonomous software delivery, shifting the developer role toward direction-setting and review.

What else you need to know:

Google Labs upgraded Opal, its no-code AI workflow builder, with an autonomous agent step that selects and calls tools like Veo and web search, plus new memory, dynamic routing and interactive chat capabilities.

Inception Labs launched Mercury 2, a diffusion-based reasoning LLM generating 1,009 tokens per second on NVIDIA Blackwell GPUs, priced at $0.25 per million input tokens with 128K context support.

Red Hat and NVIDIA launched "Red Hat AI Factory," a co-engineered platform combining both companies' AI enterprise software to streamline large-scale AI inference, model tuning and agent deployment across hybrid cloud environments.

Intuit and Anthropic announced a multi-year partnership that lets mid-market businesses build custom AI agents using Claude's Agent SDK on Intuit's platform, while embedding Intuit's financial tools directly into Claude products.

Notion launched Custom Agents that autonomously handle tasks like Q&A, request triaging and status reports across Slack, Mail, Calendar and MCP integrations. They are free until May 4, when credit-based pricing begins.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.