AI Agents Are Reading Your Docs. Are You Ready?

Last month, 48% of visitors to documentation sites across Mintlify were AI agents, not humans.

Claude Code, Cursor, and other coding agents are becoming the actual customers reading your docs. And they read everything.

This changes what good documentation means. Humans skim and forgive gaps. Agents methodically check every endpoint, read every guide, and compare you against alternatives with zero fatigue.

Your docs aren't just helping users anymore. They're your product's first interview with the machines deciding whether to recommend you.

That means: clear schema markup so agents can parse your content, real benchmarks instead of marketing fluff, open endpoints agents can actually test, and honest comparisons that emphasize strengths without hype.

Mintlify powers documentation for over 20,000 companies, reaching 100M+ people every year. We just raised a $45M Series B led by @a16z and @SalesforceVC to build the knowledge layer for the agent era.

Hello from The AI Night,

Today in AI:

Anthropic Brings Computer Use to Claude Code

Google Launches Veo 3.1 Lite With 50% Lower Cost Than Veo 3.1 Fast

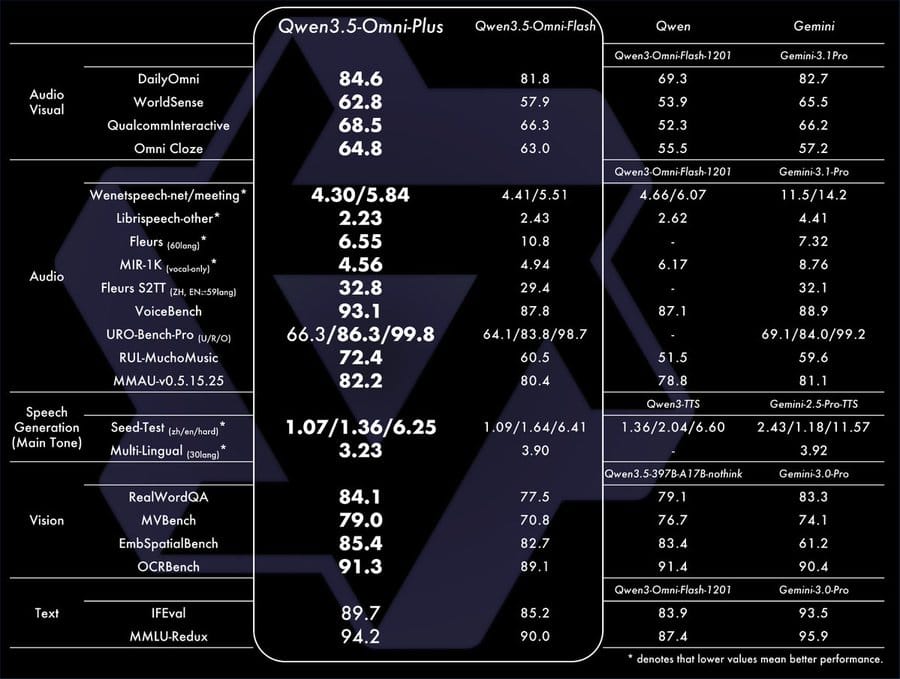

Alibaba Launches Qwen3.5-Omni With 256K Context, Voice Cloning and SOTA Audio-Visual Performance

Here's the deal: Anthropic added “Computer Use” to Claude Code. Claude can write Swift code, compile it, launch the app, click every button, catch a visual bug, fix it and confirm the fix. It works on anything you can open on your Mac. Available now as a research preview on Pro and Max plans.

The Breakdown:

Covers native macOS apps, local Electron builds, iOS Simulator and GUI-only tools that have no API or CLI equivalent.

Claude prioritizes precision; it uses MCP servers, Bash, or the Chrome extension first and only falls back to screen control when no other tool fits.

Requires macOS, Claude Code v2.1.85+ and an interactive session. Team and Enterprise plans and non-interactive mode are excluded.

Every app requires explicit per-session approval. High-access tools like terminals, Finder and System Settings trigger additional warnings.

One session locks the machine. Press Esc from anywhere to instantly stop Claude, restore hidden apps and reclaim full control.
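The tool-selection rule in the breakdown above can be sketched in a few lines. This is an illustration only, not Claude Code's implementation: the tool labels and the `pick_tool` function are hypothetical, and the ordering among the three structured tools is an assumption (the only stated rule is that screen control comes last).

```python
# Hypothetical labels for the tools named in the breakdown.
# The order among these three is an assumption made for this sketch.
STRUCTURED_TOOLS = ("mcp_server", "bash", "chrome_extension")
FALLBACK_TOOL = "screen_control"


def pick_tool(can_handle):
    """Return the tool to use, given the set of tools able to do the task.

    Structured tools win whenever one fits; screen control is chosen
    only when none of them can handle the task.
    """
    for tool in STRUCTURED_TOOLS:
        if tool in can_handle:
            return tool
    return FALLBACK_TOOL if FALLBACK_TOOL in can_handle else None


print(pick_tool({"bash", "screen_control"}))  # bash
print(pick_tool({"screen_control"}))          # screen_control
```

The second call is the GUI-only case: nothing structured fits, so the sketch falls back to screen control.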

The bigger picture: Developers building native or GUI-heavy apps have always had to leave the terminal to manually click, resize, and screenshot. That loop is now closed. Claude handles the full build-debug-verify cycle in one conversation, cutting the slowest part of native app development: the manual testing no one automates.

Here's the deal: Google released Veo 3.1 Lite, a new video generation model designed for high-volume developer use. It costs less than 50% of what Veo 3.1 Fast costs while matching its speed. On April 7, Google will also cut pricing on Veo 3.1 Fast.

The Breakdown:

Supports Text-to-Video and Image-to-Video generation.

Outputs in 720p and 1080p, with landscape (16:9) and portrait (9:16) framing.

Developers can set clip duration at 4, 6 or 8 seconds with pricing scaling accordingly.

Available now on the paid tier of the Gemini API and in Google AI Studio.

Rounds out the Veo 3.1 model family alongside the existing Fast tier.

Upcoming Veo 3.1 Fast price reduction adds further cost flexibility starting April 7.
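For budgeting, the "less than 50% of Fast" claim gives an upper bound on Lite spend. The sketch below is a rough estimate under stated assumptions: it treats pricing as linear per second of video (one reading of "pricing scaling accordingly"), and the price of `1.0` is a placeholder in arbitrary units, not a real rate. The function name is hypothetical.

```python
ALLOWED_DURATIONS = (4, 6, 8)  # clip lengths listed in the breakdown


def lite_cost_upper_bound(fast_price_per_second, seconds, clips=1):
    """Upper bound on Veo 3.1 Lite spend, given a Veo 3.1 Fast per-second price.

    Assumes linear per-second pricing (an assumption, not confirmed here)
    and applies the stated "less than 50% of Fast" ratio.
    """
    if seconds not in ALLOWED_DURATIONS:
        raise ValueError(f"duration must be one of {ALLOWED_DURATIONS}")
    fast_total = fast_price_per_second * seconds * clips
    return 0.5 * fast_total


# Placeholder price of 1.0 unit per second; substitute the published rate.
print(lite_cost_upper_bound(1.0, seconds=8, clips=1000))  # 4000.0
```

With 1,000 eight-second clips, Fast would cost 8,000 units at the placeholder rate, so Lite would come in under 4,000.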

The bigger picture: This opens video generation to developers building at scale where per-clip cost was previously a blocker. A tiered pricing model across Lite and Fast lets teams match quality and budget to specific use cases, from rapid prototyping to production pipelines.

Here's the deal: Alibaba released Qwen3.5-Omni, the latest generation of its omnimodal large language model. It comes in three sizes (Plus, Flash, Light), supports 256K context input and is available now via Offline and Realtime APIs.

The Breakdown:

Handles over 10 hours of audio input and 400+ seconds of 720p audio-visual content at 1 FPS.

Pretrained natively on 100M+ hours of audio-visual data.

Supports speech recognition in 113 languages/dialects and speech generation in 36.

Qwen3.5-Omni-Plus hit SOTA on 215 audio and audio-visual benchmarks, surpassing Gemini 3.1 Pro on general audio understanding, reasoning, recognition, translation and dialogue.

New capabilities include voice cloning, semantic interruption (filters out background noise and backchanneling), native WebSearch and function calling in real time, and end-to-end voice control for speed, volume and emotion.

Introduces "Audio-Visual Vibe Coding," where the model writes code from audio-visual instructions.

Uses ARIA (Adaptive Rate Interleave Alignment) to fix speech instability issues like number mispronunciation during streaming.

The bigger picture: This closes a real gap in multimodal AI. Builders working on voice agents, real-time assistants or multilingual audio applications now have an open-weight option that rivals Gemini 3.1 Pro across audio tasks, with production-ready APIs for both offline and real-time use.

What else you need to know:

Manus launched "My Computer," a desktop app for macOS and Windows that lets its AI agent execute terminal commands on local machines to manage files, build apps and automate workflows with user approval.

Axios, one of npm's most depended-on packages with 100M+ weekly downloads, was hit by an active supply chain attack: v1.14.1 injects malware through a fake dependency called plain-crypto-js.

Figma is rolling out four AI image tools across FigJam, Buzz, and Slides: expand image, isolate object, erase object and vectorize image, for built-in editing without external software.

Google Maps is expanding its AI-powered EV battery prediction and charging stop recommendations to over 350 Android Auto models across 15+ brands in the U.S., using real-time traffic, elevation and weather data.

Z.ai released AutoClaw, a fully local version of OpenClaw that runs on-device without an API key, supporting custom models or its GLM-5-Turbo for tool-calling tasks.
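On the Axios item above: if you want a quick check of your own project, here is a minimal sketch that scans a `package-lock.json` for the two indicators named in that item, axios at version 1.14.1 and any package called plain-crypto-js. It assumes an npm lockfile with a top-level `packages` map (lockfileVersion 2 or 3), and the function name is hypothetical. Whether other versions are affected is not covered here, so treat a clean result as a first pass, not an all-clear.

```python
import json
from pathlib import Path

# Indicators taken from the news item; nothing else is assumed about the attack.
SUSPECT_AXIOS_VERSION = "1.14.1"
SUSPECT_DEPENDENCY = "plain-crypto-js"


def find_suspects(lock):
    """Return a list of suspicious entries found in a parsed package-lock.json."""
    findings = []
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/a/node_modules/b"; keep the last package name.
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version", "")
        if name == "axios" and version == SUSPECT_AXIOS_VERSION:
            findings.append(f"{path}: axios {version}")
        elif name == SUSPECT_DEPENDENCY:
            findings.append(f"{path}: {name} {version}")
    return findings


if __name__ == "__main__":
    lock_path = Path("package-lock.json")
    if lock_path.exists():
        for finding in find_suspects(json.loads(lock_path.read_text())):
            print(finding)
```

Run it from the project root; it prints one line per match and nothing if neither indicator is present.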

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.