Hello from The AI Night,

Today in AI:

Anthropic Launches Routines in Claude Code

Lovable Adds One-Prompt Payment Integration

MiniMax Releases Self-Building Open-Weight M2.7

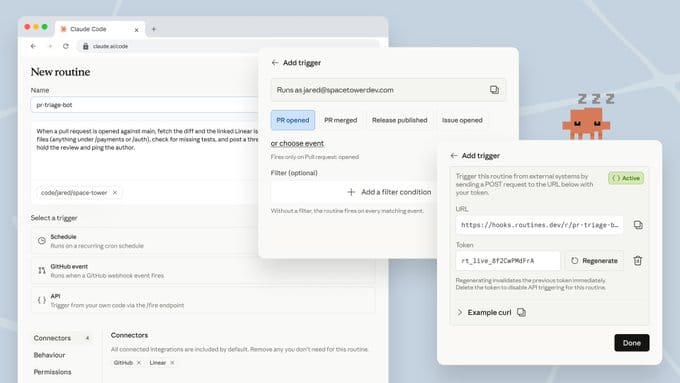

Here's the deal: Anthropic launched routines in Claude Code in research preview. A routine is a preconfigured automation with a prompt, repo access, and connectors that runs on a schedule, via API call, or in response to GitHub events, all on cloud infrastructure.

The Breakdown:

Three trigger types: scheduled (hourly, nightly, weekly), API (each routine gets its own endpoint and auth token), and GitHub webhooks (fires per PR matching your filters).

Scheduled routines handle tasks like nightly bug triage, issue labeling, and docs drift detection across merged PRs.

API routines plug into deploy pipelines, alerting tools like Datadog, and internal dashboards to run smoke checks or draft fixes automatically.

GitHub routines can port changes across SDKs or run bespoke code review checklists with inline comments before human review.

Available on Pro, Max, Team, and Enterprise plans with daily caps: 5 routine runs on Pro, 15 on Max, and 25 on Team and Enterprise.

Runs beyond the daily cap draw on extra usage credits.

CLI users can type /schedule to create scheduled routines directly.
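
For the API trigger described above, here is a rough sketch of what calling a routine from a deploy script might look like. Treat every specific as an assumption: the URL, the bearer-token header, and the `text` payload field are placeholders for illustration, not Anthropic's documented API. The real endpoint and token come from the routine's own settings.

```python
import json
import urllib.request

# Hypothetical placeholders: copy the real endpoint and token from the routine's settings.
ROUTINE_URL = "https://example.invalid/routines/ROUTINE_ID/fire"
ROUTINE_TOKEN = "replace-with-routine-token"


def build_trigger_request(url: str, token: str, context: str) -> urllib.request.Request:
    """Build a POST request that hands free-form context to a routine.

    The bearer auth scheme and the "text" field are assumptions for this sketch.
    """
    body = json.dumps({"text": context}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


request = build_trigger_request(
    ROUTINE_URL, ROUTINE_TOKEN, "Deploy finished; run smoke checks."
)
# Sending is left out on purpose: urllib.request.urlopen(request) would make the call.
```

Building the request separately from sending it keeps the sketch safe to run as-is and makes it easy to inspect what a pipeline step would send.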

The bigger picture: Every engineering team has a list of automations they know they should build but never do. Nightly triage bots, cross-repo sync scripts, deploy smoke checks. They stay undone because the setup cost never feels worth it. Anthropic just turned that setup into a single CLI command. The teams that adopt this early will save hours every week while competitors keep doing the same manual work without realizing how much time it costs them.

Here's the deal: Lovable launched Lovable Payments, a native feature that lets users add payment processing to any Lovable-built app through a conversational prompt. The system supports both Paddle and Stripe as payment providers.

The Breakdown:

Users describe what they want to sell. Lovable handles account creation, checkout flow, product setup, and UI components automatically.

Paddle serves as a Merchant of Record option, managing payments, tax, billing, and compliance across 200+ countries.

Stripe is also available, with support for 125+ local payment methods across 150+ countries.

Includes a built-in test environment with test cards before going live.

A Payments tab surfaces revenue analytics, transaction history, refunds, and a go-live checklist.

Requires a paid Lovable plan (Pro or higher) and Lovable Cloud for webhooks.

The bigger picture: Building an app with AI takes hours now. Until this launch, getting paid for it still took weeks of Stripe docs, tax setup, and checkout debugging. That gap is where most solo founders quit. Lovable just removed the last wall between "I built something" and "I'm making money from it." Every vibe-coding platform that doesn't match this will watch its users ship free projects forever and never convert to real businesses.

Here's the deal: MiniMax released M2.7 as an open-weight model. It is the first in the M2 series where the model actively participated in its own development cycle. MiniMax M2.7 was used internally to run reinforcement learning experiments, update its own memory, build skills, and iterate on its own agent harness over 100+ autonomous optimization rounds. The company says M2.7 handled 30%-50% of its own development workflow.

The Breakdown:

Scored 56.22% on SWE-Pro, matching GPT-5.3-Codex and approaching Opus 4.6 levels.

Hit 55.6% on VIBE-Pro for end-to-end project delivery and 57.0% on Terminal Bench 2.

Achieved ELO 1495 on GDPval-AA, highest among open-source models for professional task delivery.

Maintains 97% skill adherence across 40+ complex skills exceeding 2,000 tokens each.

On MLE Bench Lite, averaged a 66.6% medal rate across three 24-hour autonomous runs.

Open-sourced OpenRoom, an interactive agent demo, alongside API and agent platform access.

The bigger picture: A model that handles half of its own development is not just a benchmark story. It means the cost and time to train the next version drops with every cycle. Open-source teams now have access to a model that can improve its own tooling without waiting for the lab to ship updates. If self-improving loops become standard, the gap between open and closed models starts shrinking faster than anyone expected.

What else you need to know:

Google DeepMind released Gemini Robotics-ER 1.6, upgrading spatial reasoning, multi-view success detection, and instrument reading for robots, achieving 93% accuracy on gauge reading with agentic vision via the Gemini API.

NVIDIA released Ising, an open-source AI model family for quantum computing that delivers up to 2.5x faster decoding and 3x higher accuracy than PyMatching, the current industry standard.

Cursor 3.1 added a tiled layout for running multiple agents in parallel, upgraded voice input with batch transcription, and enabled branch selection before launching cloud agents.

Vercel open-sourced Open Agents, a reference platform for building cloud coding agents, designed to help companies create customized internal "AI software factories" with their own workflows and integrations.

Skye, a new startup, launched a beta agentic iPhone home screen that uses ambient context awareness to surface personalized weather, meeting prep, email drafts, and local recommendations automatically.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

3 ways to support us:

Forward this to your AI-curious friend → https://www.theainight.com

Sponsor The AI Night and reach 500+ AI builders daily → passionfroot.me/theainight

Reply to this email — I read every response