Hello from The AI Night,

Today in AI:

Anthropic Raises $30B at $380B Valuation

OpenAI Launches GPT-5.3-Codex-Spark, a Smaller Version of GPT-5.3-Codex

MiniMax Launches M2.5, an Open-Source Frontier Model That Competes with Opus 4.6

Image Source: Anthropic blog

Here's the deal: Anthropic closed a $30 billion Series G led by GIC and Coatue, with co-leads including D.E. Shaw Ventures, Founders Fund and MGX. The round values the company at $380 billion post-money, and the investment will fund frontier research, product development and infrastructure expansion.

The Breakdown:

Run-rate revenue hit $14 billion, growing over 10x annually for three consecutive years

Customers spending $100K+ annually grew 7x in the past year, and over 500 customers now spend $1M+ annually, up from about a dozen two years ago

Eight of the Fortune 10 use Claude

Claude Code run-rate revenue reached $2.5 billion and has more than doubled since early 2026. Enterprise use now represents over half of Claude Code revenue

Claude remains the only frontier model available across AWS Bedrock, Google Cloud Vertex AI and Microsoft Azure Foundry

Round includes portions of previously announced investments from Microsoft and NVIDIA

The bigger picture: This positions Anthropic as the best-capitalized independent AI lab, with enterprise traction that now rivals major SaaS companies. The multi-cloud and multi-chip strategy reduces lock-in risk for enterprise buyers, which could accelerate adoption further.

Image Source: OpenAI blog

Here's the deal: OpenAI released a research preview of GPT-5.3-Codex-Spark, a smaller version of GPT-5.3-Codex optimized for near-instant interactive coding. It runs on Cerebras' Wafer Scale Engine 3 and is available now for ChatGPT Pro users.

The Breakdown:

Delivers 1,000+ tokens/sec with a 128k context window (text-only at launch)

Scores 58.4% on Terminal-Bench 2.0 vs. 77.3% for full GPT-5.3-Codex, but completes tasks in a fraction of the time

End-to-end pipeline improvements cut per-roundtrip overhead by 80%, per-token overhead by 30%, and time-to-first-token by 50%

Persistent WebSocket connections enabled by default for Spark, rolling out to all models soon

Separate rate limits during the preview; API access limited to select design partners initially

First in a planned family of ultra-fast models, with larger versions, longer context and multimodal input coming

The bigger picture: This signals a shift toward two-mode coding agents: deep autonomous reasoning for complex tasks and real-time collaboration for rapid iteration. For developers, it means AI pair programming that actually keeps up with the speed of thought.

Image Source: MiniMax blog

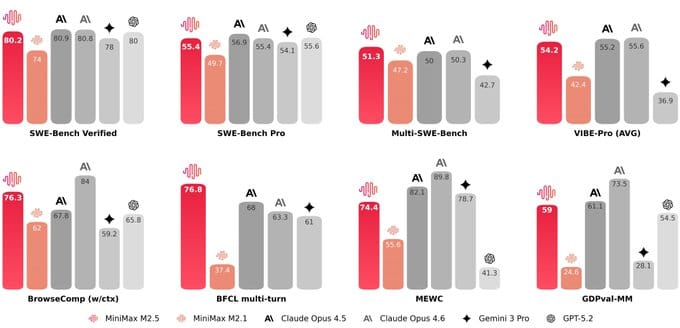

Here's the deal: MiniMax released M2.5, an open-source reasoning model trained with large-scale reinforcement learning across hundreds of thousands of real-world environments. It targets coding, agentic tool use, search and office productivity.

The Breakdown:

Scores 80.2% on SWE-Bench Verified, 51.3% on Multi-SWE-Bench, and 76.3% on BrowseComp

Outperforms Claude Opus 4.6 on SWE-Bench using both Droid (79.7 vs 78.9) and OpenCode (76.1 vs 75.9) harnesses

Runs at 100 tokens/second, roughly 2x faster than other frontier models

Costs $0.3/M input and $2.4/M output tokens (Lightning version), about 1/10th to 1/20th the price of Opus, Gemini 3 Pro or GPT-5

Running it continuously for one hour at 100 tokens/second costs just $1

Completes SWE-Bench tasks 37% faster than its predecessor M2.1, matching Opus 4.6 speed at 10% of the cost

Trained on 10+ programming languages across full-stack development workflows

Already handles 30% of MiniMax's internal tasks, with 80% of new code commits generated by the model

The bigger picture: M2.5 makes frontier-level agentic coding cheap enough to run continuously at scale. For builders shipping AI-powered dev tools or autonomous agents, the cost barrier just dropped dramatically.
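A quick sanity check on the "$1 per hour" figure: the sketch below multiplies the quoted throughput by the quoted Lightning output price. It assumes the hour is billed entirely as output tokens and ignores input tokens, caching and any pricing differences between versions, so treat it as a rough estimate rather than MiniMax's own accounting. The function name is made up for illustration.

```python
# Figures quoted in the breakdown above (Lightning version).
TOKENS_PER_SECOND = 100
OUTPUT_PRICE_PER_MILLION_USD = 2.4


def hourly_output_cost(tokens_per_second: float,
                       price_per_million_usd: float,
                       seconds: int = 3600) -> float:
    """Estimate the cost of generating output tokens nonstop for `seconds`.

    Assumption: only output tokens are billed; input tokens are ignored.
    """
    total_tokens = tokens_per_second * seconds
    return total_tokens * price_per_million_usd / 1_000_000


if __name__ == "__main__":
    cost = hourly_output_cost(TOKENS_PER_SECOND, OUTPUT_PRICE_PER_MILLION_USD)
    print(f"Estimated cost for one hour of output: ${cost:.2f}")
```

That works out to 360,000 output tokens and roughly $0.86, which lines up with the "about $1" claim once some input tokens are added on top.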

What else you need to know:

Anthropic partnered with CodePath to integrate Claude and Claude Code into its coding curriculum, giving 20,000+ students at community colleges, state schools and HBCUs access to frontier AI development tools.

OpenAI retired GPT-4o, GPT-4.1, GPT-4.1 mini and o4-mini from ChatGPT on February 13, 2026, as only 0.1% of users were still choosing GPT-4o daily. API access remained unchanged.

Mooncake joined the PyTorch Ecosystem, integrating KVCache transfer and storage with SGLang, vLLM and TensorRT-LLM to solve memory bottlenecks in large language model serving.

Cloudflare launched Markdown for Agents, automatically converting HTML to markdown when AI crawlers request it via Accept headers, cutting token usage by up to 80% for enabled sites.

Brave released API Skills for developer tools like Cursor and Claude Code plus a built-in API Assistant trained to help developers integrate its search endpoints into AI applications.
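For the Cloudflare item above, here is a minimal sketch of what asking for markdown via the Accept header could look like from an agent's side. It assumes the server honors the `text/markdown` media type on enabled sites; the user agent string, the helper names and the fallback weighting are illustrative choices, not part of Cloudflare's documentation.

```python
from urllib.request import Request

# Assumption: markdown is preferred, HTML is an acceptable fallback.
MARKDOWN_ACCEPT = "text/markdown, text/html;q=0.8"


def build_markdown_request(url: str) -> Request:
    """Build a GET request that prefers a markdown representation."""
    return Request(
        url,
        headers={
            "Accept": MARKDOWN_ACCEPT,
            "User-Agent": "example-agent/0.1",  # hypothetical agent name
        },
    )


def is_markdown(content_type: str) -> bool:
    """Check whether a Content-Type header value denotes markdown."""
    return content_type.split(";")[0].strip().lower() == "text/markdown"


# Usage (network call left commented out so the sketch runs offline):
# from urllib.request import urlopen
# with urlopen(build_markdown_request("https://example.com/")) as resp:
#     body = resp.read().decode("utf-8")
#     print(is_markdown(resp.headers.get("Content-Type", "")))
```

Checking the response Content-Type matters because sites without the feature enabled will simply return HTML as usual.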

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.