Hello from The AI Night,

Today in AI:

Claude's 1M Token Context Window Goes Live at No Extra Cost

Google Introduces Natural Disaster Predictor “Groundsource”

Claude Can Now Build Interactive Charts and Diagrams

Here's the deal: Anthropic has removed the long-context surcharge and beta requirements for its 1M token context window on Claude Opus 4.6 and Sonnet 4.6. Standard per-token pricing now applies across the full window, and media limits have jumped from 100 to 600 images or PDF pages per request.

The Breakdown:

No long-context multiplier. A 900K-token request is billed at the same per-token rate as a 9K one. Opus 4.6 runs at $5/$25 per million input/output tokens, Sonnet 4.6 at $3/$15.

Full rate limits apply at every context length. No throughput penalty for longer inputs.

Available on Claude Platform, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Azure Foundry.

Claude Code users on Max, Team, and Enterprise plans get 1M context by default with Opus 4.6, which reduces how often sessions need to be automatically compacted.

Opus 4.6 scores 78.3% on MRCR v2 at 1M tokens, the highest among frontier models at that context length.

No beta header is required. Requests that still send the old beta header keep working; the header is simply ignored.
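To make the flat pricing concrete, here is a minimal Python sketch that estimates a request's cost from the per-million-token rates quoted above, treating the first figure in each pair as the input rate and the second as the output rate. The `request_cost` helper and the model keys are hypothetical labels for illustration, not part of any Anthropic SDK, and the sketch ignores discounts such as prompt caching or batch processing.

```python
# Hypothetical helper: estimates request cost under flat per-token pricing.
# Rates are USD per million tokens, as quoted in this newsletter;
# no long-context multiplier is applied at any length.
PRICES_PER_MILLION = {
    "opus-4.6": (5.00, 25.00),    # (input rate, output rate)
    "sonnet-4.6": (3.00, 15.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost of one request at a flat per-token rate."""
    input_rate, output_rate = PRICES_PER_MILLION[model]
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


if __name__ == "__main__":
    # Compare a short prompt with a near-1M-token prompt on Opus 4.6.
    for tokens in (9_000, 900_000):
        cost = request_cost("opus-4.6", tokens, 0)
        per_million = cost / tokens * 1_000_000
        print(f"{tokens:>7} input tokens: ${cost:.4f} (${per_million:.2f} per million)")
```

Under these assumptions, a 900K-token Opus 4.6 prompt costs $4.50 in input tokens and a 9K-token prompt costs $0.045, so the effective rate is the same $5.00 per million at both lengths.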

The bigger picture: This eliminates the cost and engineering friction that made long-context impractical at scale. Teams working with full codebases, large document sets, or extended agent traces can now skip lossy summarization and context-clearing workarounds entirely.

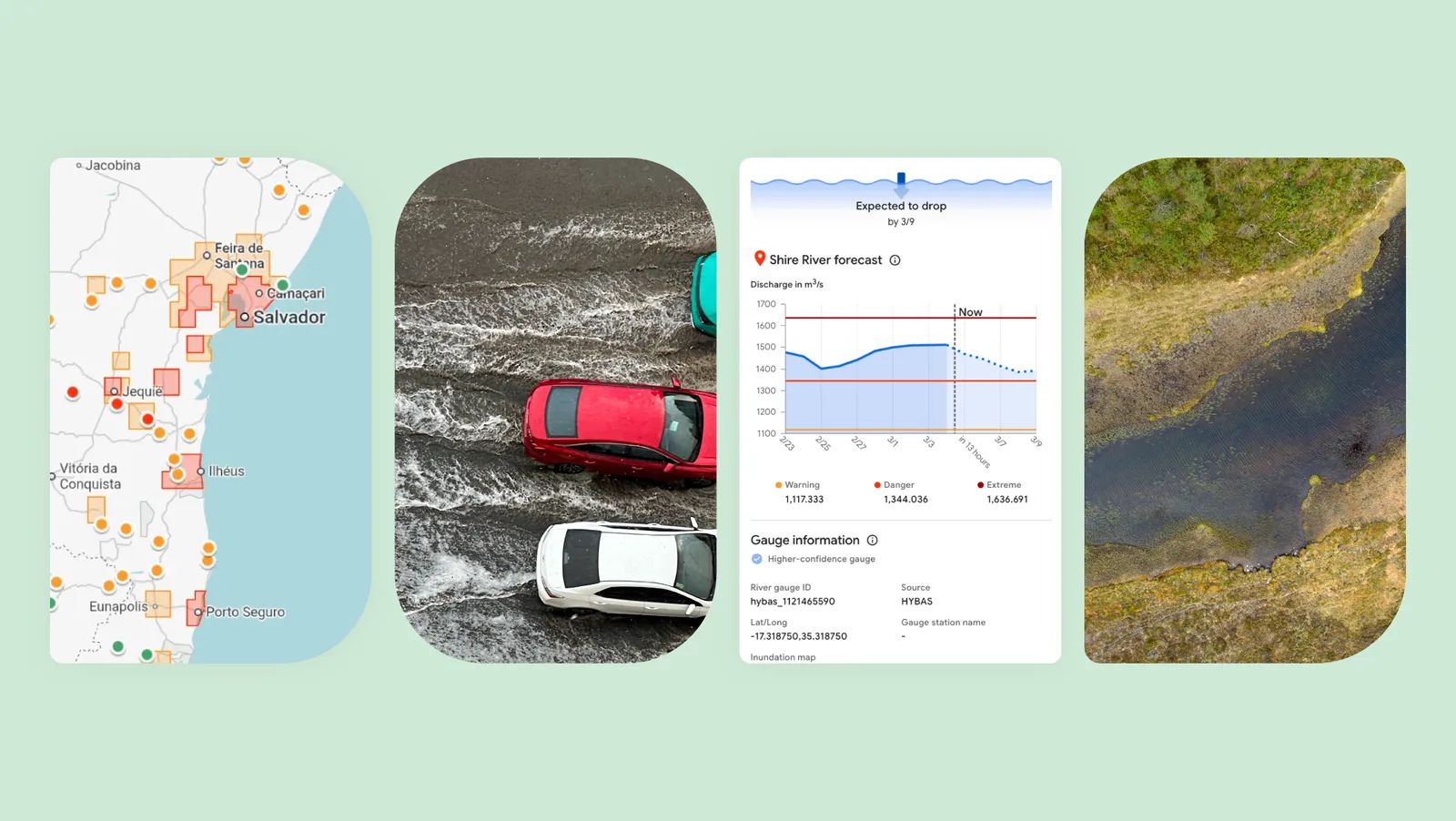

Here’s the deal: Google introduced Groundsource, a Gemini-powered methodology that converts public reports into structured historical disaster data. The first application targets urban flash floods, a category that previously lacked the high-fidelity data needed to train predictive models.

The Breakdown:

Gemini analyzed decades of public reports and identified over 2.6 million historical flood events across more than 150 countries.

Google Maps was used to assign precise geographic boundaries to each event, creating a dataset specifically focused on urban flash floods.

A new model trained on this dataset marks early progress toward predicting urban flash floods up to 24 hours in advance.

Urban flash flood forecasts are now live in Google's Flood Hub alongside existing riverine flood forecasts that cover 2 billion people.

The model and dataset join the Google Earth AI family and are open-source.

The same approach could extend to landslides, heat waves and other natural disasters.

The bigger picture: Urban flash floods have been one of the hardest disasters to forecast because usable historical data did not exist at scale. Groundsource closes that gap and gives researchers and partners an open benchmark to build on, particularly in underserved urban regions.

Here's the deal: Anthropic launched a beta feature that lets Claude generate interactive charts, diagrams, and data visualizations inline within conversations. It is available today on all plans, including free.

The Breakdown:

Claude auto-detects when a visual would explain something better than text and builds it using HTML and SVG, not generated images.

Users can interact with visuals through hover details, clickable elements, and adjustable sliders, then ask Claude to refine them in follow-up messages.

Visuals are ephemeral by default, meaning they change or disappear as conversations evolve, unlike persistent Artifacts.

Currently limited to web and desktop apps; mobile users get text-only responses.

The feature traces back to "Imagine with Claude," an experimental project previewed in fall 2025.

The launch comes three days after OpenAI shipped similar interactive visuals in ChatGPT.

The bigger picture: Interactive visuals inside the chat loop remove friction for anyone explaining processes, exploring data, or learning complex systems. With both Anthropic and OpenAI shipping this capability days apart, inline visualization is becoming table stakes for AI assistants.

AI Agents Are Reading Your Docs. Are You Ready?

Last month, 48% of visitors to documentation sites across Mintlify were AI agents—not humans.

Claude Code, Cursor, and other coding agents are becoming the actual customers reading your docs. And they read everything.

This changes what good documentation means. Humans skim and forgive gaps. Agents methodically check every endpoint, read every guide, and compare you against alternatives with zero fatigue.

Your docs aren't just helping users anymore—they're your product's first interview with the machines deciding whether to recommend you.

That means:

→ Clear schema markup so agents can parse your content

→ Real benchmarks, not marketing fluff

→ Open endpoints agents can actually test

→ Honest comparisons that emphasize strengths without hype
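One common form of schema markup is a schema.org JSON-LD block embedded in each page. The Python sketch below builds one for a documentation page using the schema.org `TechArticle` type; the helper name `doc_page_jsonld`, the chosen properties, and the example URL are illustrative assumptions, not a Mintlify feature or a required format.

```python
import json


def doc_page_jsonld(title: str, description: str, url: str, date_modified: str) -> str:
    """Build a schema.org TechArticle JSON-LD <script> tag for a docs page (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "TechArticle",        # schema.org type for technical documentation
        "headline": title,
        "description": description,
        "url": url,
        "dateModified": date_modified,  # ISO 8601 date string
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    # Example values are placeholders for illustration only.
    print(doc_page_jsonld(
        "Authentication API",
        "How to authenticate requests with an API key.",
        "https://docs.example.com/auth",
        "2026-01-15",
    ))
```

The output goes in the page's HTML, where crawlers and agents that read structured data can pick up the page type, title, and freshness without scraping the prose.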

In the agentic world, documentation becomes 10x more important. Companies that make their products machine-understandable will win distribution through AI.

What else you need to know:

Anthropic launched the Claude Partner Network with a $100 million commitment, offering enterprise partners technical certification, dedicated support, and joint market development funding for 2026.

Meta unveiled four new MTIA chips built with Broadcom on RISC-V architecture, targeting AI inference and content ranking, with three models shipping between early and late 2027.

Meta plans to cut over 15,800 jobs to help offset $60–65 billion in AI infrastructure spending, continuing a workforce reduction strategy that began with 11,000 layoffs in 2022.

Claude Code added remote-control session spawning, which lets users launch new local sessions from the mobile app. It is currently available to Max, Team, and Enterprise users on version 2.1.74 or higher.

Skiff co-founder Jason Bud is joining xAI and SpaceX alongside co-founder Andrew, citing the company's ambition to build AI from first principles and expand human agency.

Perplexity launched Computer on iOS, enabling users to start and manage agentic tasks across devices with cross-device sync, with Android support coming soon.

Devendra Chaplot, a founding team member at Mistral AI and Thinking Machines Lab (TML), announced he is joining SpaceX and xAI to work on superintelligence and robotics under Elon Musk.

Nvidia runs a structured higher education program spanning 550+ annual research grants, graduate fellowships, and internships focused on seven domains including robotics, quantum computing, and 6G.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.