Hello from The AI Night,

Today in AI:

Google Launches Gemini 3.1 Flash Live

Meta's TRIBE v2 Turns fMRI Data Into Brain Predictions

Google Launches TurboQuant to Compress LLMs 6x Without Accuracy Loss

Here's the deal: Google launched Gemini 3.1 Flash Live, available through the Gemini Live API in Google AI Studio. The model is designed to let developers build conversational agents that process audio, video and text inputs and respond at conversational speed.

The Breakdown:

The model improves latency, instruction following and task completion over previous versions, including 2.5 Flash Native Audio.

Better background noise filtering allows agents to function reliably in noisy, real-world environments like streets or rooms with TVs on.

Supports 90+ languages for real-time multimodal conversations.

Complex system instruction adherence has been improved, keeping agents within defined guardrails even during unpredictable conversations.

Partner integrations support WebRTC scaling and global edge routing for production deployment.

Early adopters include Stitch (voice-driven design), Hey Ato (AI companion for older adults) and Wits End (RPG game master with theatrical delivery).

The bigger picture: This gives developers a production-grade foundation for voice-first AI applications, where latency and reliability have historically been blockers. The multilingual and multimodal support significantly widens the range of use cases developers can address.

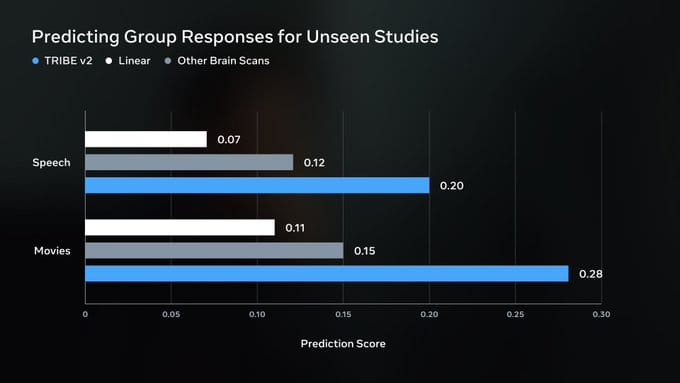

Here's the deal: Meta researchers released TRIBE v2, a tri-modal foundation model trained on over 1,000 hours of fMRI data from 720 subjects. It predicts high-resolution human brain responses to video, audio and language stimuli, outperforming traditional linear encoding models by several-fold in accuracy.

The Breakdown:

TRIBE v2 handles naturalistic and experimental conditions across three modalities (video, audio, language) in a single unified model, replacing the fragmented single-paradigm approaches that have defined cognitive neuroscience.

The model enables in-silico experimentation, meaning researchers can simulate brain responses computationally instead of running costly fMRI studies. Tested against seminal visual and neuro-linguistic paradigms, it reproduced results established by decades of empirical work.

It extracts interpretable latent features that reveal fine-grained topography of multisensory integration, mapping how the brain combines inputs across senses.

The paper is published on arXiv, led by researchers including Jean-Rémi King.

The bigger picture: This positions AI as a practical tool for neuroscience research at scale, potentially compressing years of brain-imaging experiments into computational simulations. Neuroscience labs and brain-computer interface teams stand to benefit most.
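For readers unfamiliar with the "traditional linear encoding models" TRIBE v2 is compared against, here is a minimal sketch of that baseline idea: fit a ridge regression from stimulus features to a single voxel's response, then score it by correlation on held-out data. Everything in it is an assumption for illustration (the synthetic data, the two features, the weights, the noise level); it is not Meta's code or evaluation setup.

```python
import math
import random

rng = random.Random(0)
TRUE_W = (0.8, -0.5)  # hypothetical ground-truth weights for the simulation


def simulate(n):
    """Generate synthetic stimulus features and a noisy 'voxel' response."""
    X = [(rng.gauss(0, 1), rng.gauss(0, 1)) for _ in range(n)]
    y = [TRUE_W[0] * a + TRUE_W[1] * b + rng.gauss(0, 0.3) for a, b in X]
    return X, y


def fit_ridge_2d(X, y, alpha=1.0):
    """Closed-form ridge for two features: w = (X^T X + alpha*I)^-1 X^T y."""
    a = sum(x[0] * x[0] for x in X) + alpha
    b = sum(x[0] * x[1] for x in X)
    d = sum(x[1] * x[1] for x in X) + alpha
    g0 = sum(x[0] * t for x, t in zip(X, y))
    g1 = sum(x[1] * t for x, t in zip(X, y))
    det = a * d - b * b
    return ((d * g0 - b * g1) / det, (a * g1 - b * g0) / det)


def pearson(u, v):
    """Pearson correlation, the usual score for encoding models."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    cov = sum((p - mu) * (q - mv) for p, q in zip(u, v))
    su = math.sqrt(sum((p - mu) ** 2 for p in u))
    sv = math.sqrt(sum((q - mv) ** 2 for q in v))
    return cov / (su * sv)


X_train, y_train = simulate(200)
X_test, y_test = simulate(100)

w = fit_ridge_2d(X_train, y_train)
pred = [w[0] * a + w[1] * b for a, b in X_test]
r = pearson(pred, y_test)
print("fitted weights:", w, "held-out correlation:", r)
```

A model like this can only capture responses that are a weighted sum of its input features, which is the limitation a learned multimodal model aims to get past.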

Here's the deal: Google introduced TurboQuant, a quantization algorithm to be presented at ICLR 2026. It compresses large language model key-value caches and vector search indices while eliminating the memory overhead that traditional quantization methods carry.

The Breakdown:

TurboQuant combines two sub-algorithms: PolarQuant (converts vectors to polar coordinates to skip expensive normalization) and QJL (a 1-bit error-correction step with zero memory overhead).

Achieves 3-bit KV cache quantization without training or fine-tuning, reducing memory by at least 6x.

4-bit TurboQuant hits up to 8x speedup over 32-bit unquantized keys on H100 GPUs.

Maintains perfect scores on needle-in-a-haystack benchmarks and outperforms baselines like KIVI, PQ and RaBitQ on recall.

Tested across LongBench, ZeroSCROLLS, RULER and L-Eval using Gemma and Mistral models.

PolarQuant will be presented separately at AISTATS 2026.

The bigger picture: Cheaper KV caches directly lower the cost of serving long-context LLM queries at scale. For vector search, near-lossless 3-bit compression means faster index building and retrieval, which is critical as semantic search workloads grow across Google's infrastructure and beyond.
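To make the 1-bit idea behind QJL more concrete, here is a minimal sketch of a sign-of-random-projection inner-product estimator: each key is reduced to one sign bit per projection, and the query is left unquantized. This is an illustration of the general technique only, not Google's implementation or the full TurboQuant pipeline. The dimensions, the seed, the function names and the choice to keep one norm per key are all assumptions made for the example.

```python
import math
import random

rng = random.Random(0)
D, M = 16, 4000  # hypothetical vector dimension and number of projections

# Shared random Gaussian projection matrix (M rows of length D).
S = [[rng.gauss(0, 1) for _ in range(D)] for _ in range(M)]


def project(matrix, v):
    """Multiply the projection matrix by vector v."""
    return [sum(s * x for s, x in zip(row, v)) for row in matrix]


def quantize_key(matrix, k):
    """Keep only the sign bit of each projection, plus the key's norm."""
    signs = [1 if p >= 0 else -1 for p in project(matrix, k)]
    norm = math.sqrt(sum(x * x for x in k))
    return signs, norm


def estimate_dot(matrix, q, signs, norm):
    """Unbiased estimate of <q, k> from the 1-bit key representation."""
    proj_q = project(matrix, q)
    scale = math.sqrt(math.pi / 2) * norm / len(matrix)
    return scale * sum(p * s for p, s in zip(proj_q, signs))


q = [rng.gauss(0, 1) for _ in range(D)]
k = [rng.gauss(0, 1) for _ in range(D)]

signs, k_norm = quantize_key(S, k)
exact = sum(a * b for a, b in zip(q, k))
approx = estimate_dot(S, q, signs, k_norm)
q_norm = math.sqrt(sum(x * x for x in q))
print("exact:", exact, "1-bit estimate:", approx)
```

The sqrt(pi/2) factor corrects for the information lost by keeping only signs, so the estimate is right on average and tightens as the number of projections grows.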

What else you need to know:

OpenAI launches a Codex use case gallery featuring practical coding and non-coding task examples, with starter prompts users can open directly inside the Codex app.

Meta released SAM 3.1, a drop-in update to SAM 3 that adds object multiplexing to improve video processing efficiency while maintaining accuracy on smaller hardware.

Z.ai expanded GLM-5.1 access to all GLM Coding Plan subscribers. The model scored 45.3 on a coding benchmark, up from GLM-5's 35.4 but still trailing Claude Opus 4.6's 47.9.

Cursor launches self-hosted cloud agents for enterprises, letting teams run autonomous coding agents entirely within their own infrastructure without code or build artifacts leaving their environment.

Google launches memory and chat history import tools for Gemini, letting users transfer preferences and past conversations from other AI apps via copy-paste or ZIP file upload.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.