Hello from The AI Night,

Today in AI:

Google Upgrades Gemini 3 Deep Think with Record-Setting Reasoning Benchmarks

Zhipu AI Launches GLM-5, Largest Open-Weight Model for Vibe Coding

Manus AI Introduces Lockable, Reusable Skill Libraries for Teams

Image Source: Google blog

Here's the deal: Google released a major upgrade to Gemini 3 Deep Think, its specialized reasoning mode built for science, research and engineering. It's now available to Google AI Ultra subscribers in the Gemini app and, for the first time, via the Gemini API through an early-access program.

The Breakdown:

Scores 48.4% on Humanity's Last Exam (without tools), setting a new high for frontier models

Hits 84.6% on ARC-AGI-2, verified by the ARC Prize Foundation

Reaches 3455 Elo on Codeforces competitive programming

Achieves gold-medal-level performance on the 2025 International Mathematical Olympiad and also excels in physics and chemistry

Scores 50.5% on CMT-Benchmark for advanced theoretical physics

A Rutgers mathematician used it to catch a subtle logical flaw in a peer-reviewed math paper

Practical applications include converting sketches into 3D-printable files

The bigger picture: Deep Think puts Google at the top of reasoning benchmarks across math, code, and science at once. API access opens the door for researchers and engineers to integrate frontier-level reasoning directly into their workflows.

Image Source: Zhipu AI blog

Here's the deal: Zhipu AI released GLM-5, a 744B-parameter mixture-of-experts (MoE) model (40B active) trained on 28.5T tokens. It is open-sourced under the MIT License and targets complex systems engineering and long-horizon agentic tasks.

The Breakdown:

Scales from GLM-4.5's 355B parameters (32B active) to 744B (40B active), with pre-training data increasing from 23T to 28.5T tokens

Integrates DeepSeek Sparse Attention to reduce deployment cost while preserving long-context capacity

Introduces "slime," a new asynchronous RL infrastructure for more efficient post-training

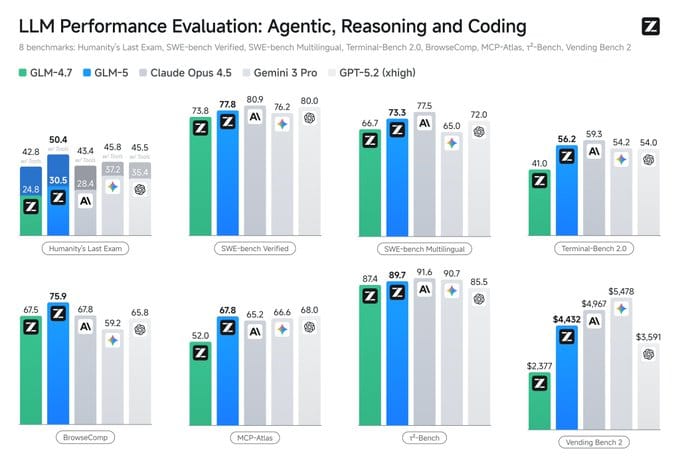

Ranks #1 among open-source models on Vending Bench 2 (long-term planning), finishing with $4,432, compared with $4,967 for the closed Claude Opus 4.5

Scores 75.9 on BrowseComp, 67.8 on MCP-Atlas and 77.8 on SWE-bench Verified, leading open-source peers

On CC-Bench-V2, GLM-5 hits 98% frontend build success rate vs. Claude Opus 4.5's 93%

Compatible with Claude Code, OpenClaw and other coding agents. Weights are available on Hugging Face

The bigger picture: GLM-5 is the strongest open-source contender for agentic coding and long-horizon tasks, narrowing the gap to frontier closed models. For builders who want local deployment or cost control on complex engineering workflows, this changes the calculus.
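For a rough sense of the scaling numbers quoted above, here is a minimal Python sketch that computes what share of each model's parameters is active per token and how much the pre-training data grew. It uses only the figures from this item; the helper name `active_share` and the dictionary layout are ours, for illustration only.

```python
# Figures quoted in this item: parameters in billions, tokens in trillions.
MODELS = {
    "GLM-4.5": {"total_b": 355, "active_b": 32, "tokens_t": 23.0},
    "GLM-5": {"total_b": 744, "active_b": 40, "tokens_t": 28.5},
}


def active_share(total_b: float, active_b: float) -> float:
    """Fraction of a mixture-of-experts model's parameters used per token."""
    return active_b / total_b


for name, m in MODELS.items():
    share = active_share(m["total_b"], m["active_b"])
    print(f"{name}: {share:.1%} of parameters active per token")

# Relative growth in pre-training tokens from GLM-4.5 to GLM-5.
growth = MODELS["GLM-5"]["tokens_t"] / MODELS["GLM-4.5"]["tokens_t"] - 1
print(f"Pre-training data growth: {growth:.1%}")
```

By this arithmetic, the active share falls from about 9.0% to about 5.4% while total parameters roughly double, and the training data grows by about 24%.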

Image Source: Manus AI blog

Here's the deal: Manus AI released Project Skills, a feature that lets teams build curated skill libraries tied to specific projects. Each project becomes a self-contained workspace where only its assigned skills can trigger during tasks.

The Breakdown:

Teams can pull skills from three sources: organization-wide "Team Skills," personal libraries, and Manus-provided official skill packs

When a task runs inside a project, only skills explicitly added to that project's library are available. Skills outside the library will not fire even if needed

A lock feature lets project owners freeze the skill set, preventing edits from other members and creating a "gold-standard" workflow

New team members inherit the full skill setup the moment they join a project, replacing traditional onboarding docs

Available to all Manus users starting today

The bigger picture: This positions Manus as an operational layer, not just a task runner. For teams scaling repeatable AI workflows, Project Skills reduces onboarding friction and enforces process consistency, turning individual expertise into an organizational asset that compounds over time.
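To make the gating and locking behavior described above concrete, here is a small Python sketch. It is not Manus's API: the `Project` class, its fields, and the method names are hypothetical, and it assumes the project owner can still edit a locked library (the announcement only says edits from other members are blocked).

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    """Hypothetical model of a project-scoped skill library."""
    owner: str
    skills: set[str] = field(default_factory=set)
    locked: bool = False

    def add_skill(self, user: str, skill: str) -> None:
        # Assumption: once locked, only the owner may change the skill set.
        if self.locked and user != self.owner:
            raise PermissionError(f"{user} cannot edit a locked skill library")
        self.skills.add(skill)

    def can_trigger(self, skill: str) -> bool:
        # Only skills explicitly added to this project are available to tasks.
        return skill in self.skills


project = Project(owner="ana")
project.add_skill("ana", "quarterly-report")
project.locked = True

print(project.can_trigger("quarterly-report"))  # True
print(project.can_trigger("web-scraper"))       # False
```

In this sketch, a call such as `project.add_skill("ben", "web-scraper")` after locking raises `PermissionError`, which mirrors the frozen skill set described above.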

What else you need to know:

Anthropic is donating $20 million to Public First Action, a new bipartisan 501(c)(4) advocating for AI transparency safeguards, federal governance frameworks and export controls on AI chips.

Cursor opened its long-running agents research preview to Ultra, Teams and Enterprise users, allowing autonomous coding sessions lasting up to 52 hours that produce large production-ready PRs with minimal follow-up.

Google DeepMind published results showing Gemini Deep Think's Aletheia agent can autonomously solve research-level math problems and generate publishable papers while resolving long-standing conjectures in physics and CS.

Uber Eats launched Cart Assistant, an AI feature that lets users type lists or upload images of handwritten lists to automatically populate grocery baskets based on availability and past orders.

Cursor tripled Composer 1.5 usage limits and introduced separate agent and API billing pools for individual subscribers, with its model outscoring Claude Sonnet 4.5 on Terminal-Bench 2.0.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.