Hello from The AI Night,

Today in AI:

OpenAI Announces Unified AI Super App After Closing $122B Funding Round

Anthropic Launches NO_FLICKER Mode for Claude Code

Anthropic Just Made Memory Available On Their Free Plan

Here's the deal: OpenAI announced a unified AI super app and closed a $122 billion funding round at a post-money valuation of $852 billion. The round was anchored by Amazon, NVIDIA, SoftBank, and Microsoft, with co-leads including a16z, D. E. Shaw Ventures, MGX, and TPG.

The Breakdown:

Revenue has hit $2 billion per month, up from $1 billion per quarter at the end of 2024.

ChatGPT now has over 900 million weekly active users and 50 million subscribers.

Enterprise makes up over 40% of revenue and is on track to reach parity with consumer revenue by the end of 2026.

APIs process more than 15 billion tokens per minute.

Codex has 2 million weekly users, up 5x in three months.

OpenAI expanded its credit facility to $4.7 billion across 11 global banks.

For the first time, over $3 billion was raised from individual investors through bank channels.

ARK Invest will include OpenAI in several ETFs.

The bigger picture: This is the largest private funding round in tech history, signaling that institutional capital views OpenAI as core AI infrastructure, not just a model provider. The scale of compute investment and enterprise traction reinforces a flywheel in which better models drive adoption, which in turn funds the next generation of infrastructure.
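By the numbers: the figures in the breakdown are quoted in mixed units (per month, per quarter, per minute), so a quick back-of-the-envelope conversion helps put them side by side. The sketch below uses only the numbers reported above. The variable names are ours, the annualized run rate assumes the current monthly figure simply holds for twelve months, and the valuation multiple is a rough ratio, not a reported metric.

```python
# Figures as reported in the breakdown above (USD and tokens).
monthly_revenue_now = 2_000_000_000          # $2B per month today
quarterly_revenue_end_2024 = 1_000_000_000   # $1B per quarter at the end of 2024
post_money_valuation = 852_000_000_000       # $852B post-money
tokens_per_minute = 15_000_000_000           # "more than 15 billion", used as a floor

# Put both revenue figures on a quarterly basis before comparing them.
quarterly_revenue_now = monthly_revenue_now * 3
growth_multiple = quarterly_revenue_now / quarterly_revenue_end_2024  # 6.0

# Assumption: the current month repeats for a full year.
annualized_run_rate = monthly_revenue_now * 12  # 24_000_000_000

# Rough ratio of valuation to that assumed run rate.
valuation_to_run_rate = post_money_valuation / annualized_run_rate  # 35.5

# API throughput expressed per second instead of per minute.
tokens_per_second = tokens_per_minute / 60  # 250_000_000.0

print(f"Revenue growth since end of 2024: {growth_multiple:.0f}x")
print(f"Annualized run rate: ${annualized_run_rate / 1e9:.0f}B")
print(f"Valuation / run rate: {valuation_to_run_rate:.1f}x")
print(f"API tokens per second: {tokens_per_second / 1e6:.0f}M")
```

In other words, going from $1 billion per quarter to $2 billion per month is roughly a 6x increase, and the round prices the company at about 35 times that assumed run rate.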

Here's the deal: Anthropic released an experimental NO_FLICKER renderer for Claude Code that virtualizes the terminal viewport to eliminate screen flickering. Users can enable it by running CLAUDE_CODE_NO_FLICKER=1 claude. Most internal users at Anthropic already prefer it over the default renderer.

The Breakdown:

Standard terminal renderers use ANSI escape codes, which have no command to repaint a single row outside the viewport. The only fix is clearing the entire screen, causing flicker.

The new renderer virtualizes the full viewport and handles keyboard and mouse events at the application layer.

CPU and memory usage stay constant as conversations grow.

Mouse clicks now work in the terminal for cursor repositioning and interacting with UI elements.

Text selection excludes line numbers and UI chrome.

Native cmd+f search and copy-paste no longer work. Replacements: ctrl+o then / for search, and auto-copy on selection in place of manual copy.

Scroll physics still vary across devices and are being tuned.

The bigger picture: Developers running long Claude Code sessions face compounding flicker and resource consumption. This mode trades some native terminal shortcuts for a stable, app-like experience, addressing two of the most common complaints about terminal-based AI coding tools.
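For the curious: the flicker problem described in the breakdown comes down to what ANSI escape codes can address. Cursor positioning works only within the visible screen, so an app that owns its own viewport can repaint just the rows that changed instead of clearing everything. The sketch below illustrates that general idea with standard CSI sequences. It is not Anthropic's implementation, and the `VirtualViewport` class and helper names are hypothetical.

```python
ESC = "\x1b"
CLEAR_SCREEN = f"{ESC}[2J"   # CSI 2 J: erase the entire visible screen
ERASE_LINE = f"{ESC}[2K"     # CSI 2 K: erase the line the cursor is on


def move_cursor(row: int, col: int = 1) -> str:
    """CSI row;col H: move to a 1-based position inside the visible screen."""
    return f"{ESC}[{row};{col}H"


def full_repaint(lines: list[str]) -> str:
    """The flicker-prone approach: wipe the screen, then redraw every row."""
    return CLEAR_SCREEN + move_cursor(1) + "\n".join(lines)


def diff_repaint(old: list[str], new: list[str]) -> str:
    """Rewrite only the rows that differ between two same-height frames."""
    patch = []
    for row, (before, after) in enumerate(zip(old, new), start=1):
        if before != after:
            patch.append(move_cursor(row) + ERASE_LINE + after)
    return "".join(patch)


class VirtualViewport:
    """Hypothetical app-owned viewport: the app keeps the transcript and
    decides which rows are visible, so every row it draws is addressable."""

    def __init__(self, height: int) -> None:
        self.height = height
        self.history: list[str] = []      # transcript kept by the app
        self.shown = [""] * height        # what is currently on screen

    def append(self, line: str) -> None:
        self.history.append(line)

    def render(self) -> str:
        """Return the escape sequences needed to bring the screen up to date."""
        visible = self.history[-self.height:]
        visible = visible + [""] * (self.height - len(visible))
        patch = diff_repaint(self.shown, visible)
        self.shown = visible
        return patch


vp = VirtualViewport(height=3)
vp.append("first line")
first_patch = vp.render()    # touches row 1 only
idle_patch = vp.render()     # nothing changed, so nothing is written
vp.append("second line")
second_patch = vp.render()   # touches row 2 only
```

Because each frame writes only the rows that changed, the work per repaint in this sketch is bounded by the viewport height rather than the length of the conversation. The trade-off is the one noted above: once the app owns scrolling and selection, native terminal features such as search have to be reimplemented.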

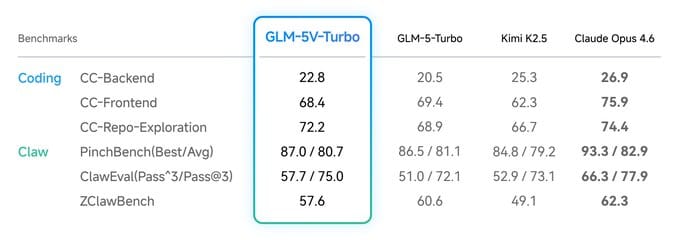

Here's the deal: Zhipu AI released GLM-5V-Turbo, a multimodal model built to understand images, videos, design drafts, and document layouts, then generate functional code from what it sees. The model is live at chat.z.ai with API access available.

The Breakdown:

Natively fuses text and vision from pre-training using a new CogViT visual encoder that hits state-of-the-art on object recognition, fine-grained understanding, and spatial perception.

Leads benchmarks in design draft reconstruction, visual code generation, multimodal retrieval, and GUI agent tasks including AndroidWorld and WebVoyager.

Pure-text coding performance stays stable across CC-Bench-V2 (backend, frontend, repo exploration), meaning visual capabilities do not degrade text reasoning.

RL training covers 30+ task types simultaneously (STEM, grounding, video, GUI agents) to reduce instability from single-domain optimization.

Supports multimodal tool use including visual search, drawing, and web reading, with explicit integration for Claude Code and OpenClaw agent workflows.

The bigger picture: This pushes coding assistants beyond text-only input. Developers and designers working from mockups, screenshots, or UI recordings now have a model purpose-built to close the gap between visual intent and working code.

AI Agents Are Reading Your Docs. Are You Ready?

Last month, 48% of visitors to documentation sites across Mintlify were AI agents, not humans.

Claude Code, Cursor, and other coding agents are becoming the actual customers reading your docs. And they read everything.

This changes what good documentation means. Humans skim and forgive gaps. Agents methodically check every endpoint, read every guide, and compare you against alternatives with zero fatigue.

Your docs aren't just helping users anymore. They're your product's first interview with the machines deciding whether to recommend you.

That means: clear schema markup so agents can parse your content, real benchmarks instead of marketing fluff, open endpoints agents can actually test, and honest comparisons that emphasize strengths without hype.

Mintlify powers documentation for over 20,000 companies, reaching 100M+ people every year. We just raised a $45M Series B led by @a16z and @SalesforceVC to build the knowledge layer for the agent era.

What else you need to know:

Anthropic signed an MOU with the Australian government on AI safety research and committed AUD$3 million in API credits to four Australian research institutions focused on genomics, rare diseases, and computing education.

Perplexity added a "Navigate my taxes" option to its Computer feature, enabling its AI agent to assist users in preparing their federal tax returns directly through the platform.

Slack launched a built-in CRM for small businesses, powered by Salesforce, letting users manage contacts, track deals, and log activity through Slackbot prompts, included free with Business+ plans.

Tesla's FSD v14.3, which adds improved reasoning and reinforcement learning capabilities, has entered employee beta testing, with Elon Musk projecting a wide public release by week's end.

OpenAI reset Codex usage limits across all plans after dashboards showed unexplained spikes in users hitting rate caps, and banned a batch of fraudulent accounts to reclaim compute resources.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.