Hello from The AI Night,

Today in AI:

OpenAI Launches GPT-5.4 mini and nano

Manus Launches Its Desktop App “My Computer”

NVIDIA Announces DLSS 5, a Real-time Neural Rendering Model

Here's the deal: OpenAI released GPT-5.4 mini and nano, two smaller models built from GPT-5.4 and designed for high-volume, low-latency tasks. Both are available now: mini in the API, Codex, and ChatGPT, and nano in the API only.

The Breakdown:

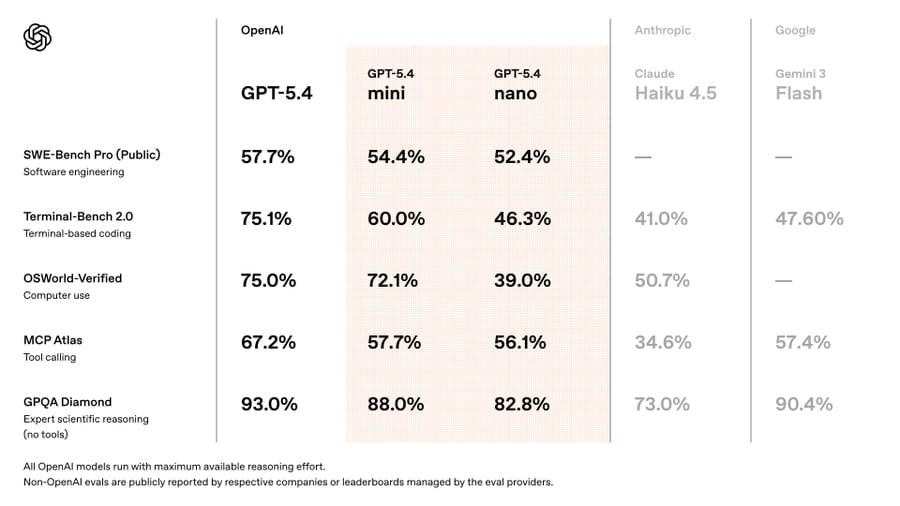

GPT-5.4 mini runs over 2x faster than GPT-5 mini and comes close to full GPT-5.4 on benchmarks like SWE-Bench Pro (54.4% vs 57.7%) and OSWorld-Verified (72.1% vs 75.0%).

Nano is the cheapest option at $0.20/1M input and $1.25/1M output tokens, built for classification, data extraction, and lightweight coding subagents.

Mini supports a 400K context window, tool use, function calling, web search, computer use, and image inputs at $0.75/1M input and $4.50/1M output tokens.

In Codex, mini uses only 30% of the GPT-5.4 quota. Codex can also delegate subtasks to mini subagents automatically.

Mini is available to free ChatGPT users through the Thinking feature.
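To make the pricing gap concrete, here is a minimal sketch that estimates spend from the per-token prices quoted above. The workload (10,000 requests at 2,000 input and 300 output tokens each) is a made-up assumption, and the dictionary keys are just labels for this example, not confirmed API model IDs.

```python
# Prices in USD per 1M tokens, taken from the figures quoted above.
# The keys are illustrative labels, not confirmed API model identifiers.
PRICES = {
    "gpt-5.4-mini": {"input": 0.75, "output": 4.50},
    "gpt-5.4-nano": {"input": 0.20, "output": 1.25},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost for a given token volume."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests, 2,000 input + 300 output tokens each.
    requests = 10_000
    for model in PRICES:
        cost = estimate_cost(model, requests * 2_000, requests * 300)
        print(f"{model}: ${cost:.2f}")
```

Under those assumptions, mini comes to about $28.50 and nano to about $7.75, so nano is roughly a quarter of the cost for the same token volume.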

The bigger picture: These models push the "right-size your model" strategy further. Developers building agentic systems can now pair GPT-5.4 for planning with mini or nano for parallel execution, cutting costs and latency without giving up much capability.
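The plan-then-fan-out pattern described above might look roughly like this. It is only a sketch: `plan` and `execute` are hypothetical stand-ins for calls to a larger planning model and a smaller worker model, and no real API is called.

```python
from concurrent.futures import ThreadPoolExecutor


def plan(task: str) -> list[str]:
    """Hypothetical stand-in for a call to the larger planning model."""
    return [f"{task}: step {i}" for i in range(1, 4)]


def execute(subtask: str) -> str:
    """Hypothetical stand-in for a call to a smaller, cheaper worker model."""
    return f"done: {subtask}"


def run(task: str) -> list[str]:
    """Plan once, then run the subtasks in parallel on the worker stand-in."""
    subtasks = plan(task)
    with ThreadPoolExecutor(max_workers=4) as pool:
        # pool.map returns results in the same order as the subtasks.
        return list(pool.map(execute, subtasks))


if __name__ == "__main__":
    for result in run("refactor"):
        print(result)
```

In a real system the two stubs would be replaced by model calls, and the thread pool would let the cheaper worker calls overlap instead of running one after another.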

Here's the deal: Manus has launched "My Computer," a desktop app for macOS and Windows that lets its AI agent execute tasks directly on your local machine through command-line instructions. The feature bridges cloud-based AI with local compute and workflows.

The Breakdown:

Manus operates by running terminal commands on your machine, enabling file management, app development and local automation.

Every command requires explicit user approval, with options for "Always Allow" or "Allow Once" per operation.

The agent can leverage local GPUs for model training or inference, turning idle hardware into a remote AI workstation.

It integrates with existing Manus features like Google Calendar, Gmail, scheduled tasks and custom agents, combining cloud services with local resources.

A demo showed Manus building a fully functional Swift app on a Mac in 20 minutes, entirely through the CLI, with no manual coding.
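For a sense of how "Always Allow" and "Allow Once" approvals could work, here is a minimal sketch of a per-command approval gate. This is not Manus's actual code: the `ApprovalGate` class, the `decision` strings, and the choice to remember approvals by program name are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ApprovalGate:
    """Hypothetical approval gate; not Manus's actual implementation."""

    # Programs the user has approved permanently (assumed granularity).
    always_allowed: set[str] = field(default_factory=set)

    def request(self, command: str, decision: str) -> bool:
        """Return True if the command may run.

        `decision` stands in for the user's answer to the prompt:
        "always", "once", or "deny".
        """
        parts = command.split()
        if not parts:
            return False  # nothing to run
        program = parts[0]
        if program in self.always_allowed:
            return True  # standing approval, no prompt needed
        if decision == "always":
            self.always_allowed.add(program)
            return True
        return decision == "once"


if __name__ == "__main__":
    gate = ApprovalGate()
    print(gate.request("ls -la", "once"))        # approved for this run only
    print(gate.request("ls -la", "deny"))        # no standing approval, so denied
    print(gate.request("git status", "always"))  # approved and remembered
    print(gate.request("git pull", "deny"))      # still runs: "git" is always-allowed
```

The last call shows the trade-off: a standing approval saves prompts but widens what the agent can do without asking, which is why the granularity of "Always Allow" matters.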

The bigger picture: This positions Manus as one of the first general AI agents with sanctioned local machine access, a step beyond browser-based or cloud-sandboxed competitors. If the approval controls hold up, it sets a template for how desktop-level AI automation could ship safely.

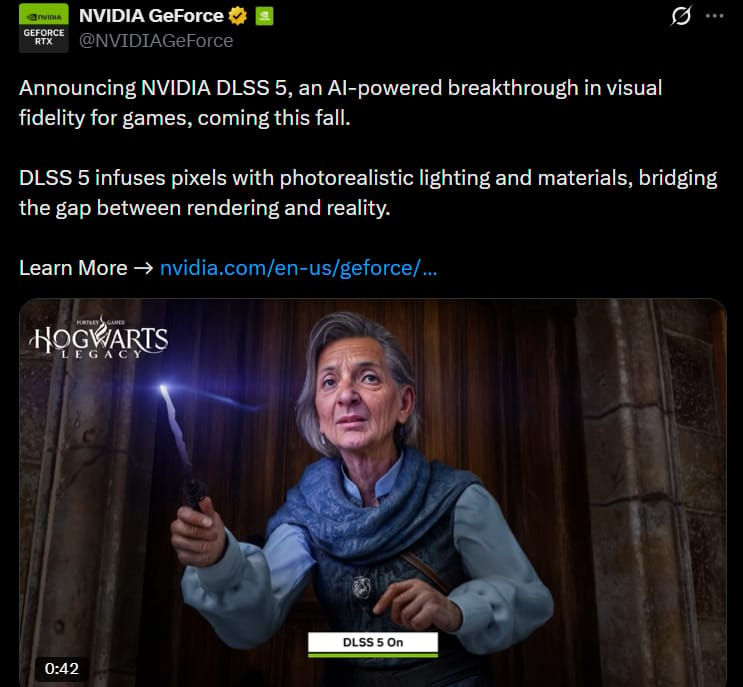

Here's the deal: NVIDIA announced DLSS 5, a real-time neural rendering model that applies AI-generated photoreal lighting and materials to game frames. It takes color and motion vectors as input and outputs enhanced visuals at up to 4K resolution, anchored to the developer's original 3D content.

The Breakdown:

DLSS 5 uses an AI model trained to understand scene semantics (characters, hair, fabric, skin, environmental lighting) from a single frame, then generates lighting effects like subsurface scattering and fabric sheen while preserving scene structure.

Unlike offline video AI models, DLSS 5 is deterministic and temporally stable: its output stays consistent from frame to frame, which makes it suitable for real-time gameplay.

Developers retain control over intensity, color grading, and masking through the existing NVIDIA Streamline framework.

Confirmed publisher support includes Bethesda, CAPCOM, Ubisoft, Tencent, and Warner Bros. Games, with titles like Starfield, Assassin's Creed Shadows, and Resident Evil Requiem.

DLSS 5 launches this fall, with a first preview shown at GTC this week.

The bigger picture: This shifts DLSS from a performance tool to a visual fidelity layer, narrowing the gap between real-time game rendering and offline, cinematic-quality VFX. For developers, it means achieving near-photoreal visuals without the compute budgets Hollywood pipelines require.

Your Docs Deserve Better Than You

Hate writing docs? Same.

Mintlify built something clever: swap "github.com" with "mintlify.com" in any public repo URL and get a fully structured, branded documentation site.

Under the hood, AI agents study your codebase before writing a single word. They scrape your README, pull brand colors, analyze your API surface, and build a structural plan first. The result? Docs that actually make sense, not the rambling, contradictory mess most AI generators spit out.

Parallel subagents then write each section simultaneously, slashing generation time nearly in half. A final validation sweep catches broken links and loose ends before you ever see it.

What used to take weeks of painful blank-page staring is now a few minutes of editing something that already exists.

Try it on any open-source project you love. You might be surprised how close to ready it already is.

What else you need to know:

OpenAI's Codex now supports subagent workflows, letting developers spawn parallel specialized agents for complex tasks like PR reviews, codebase exploration, and CSV batch processing.

NVIDIA launched Vera, its first CPU purpose-built for agentic AI, delivering twice the efficiency of traditional rack-scale CPUs and 50% faster performance, with availability in H2 2026.

xAI launched a Text to Speech API for Grok, giving developers access to natural voices and expressive audio controls for building voice-enabled applications.

Cursor open-sourced four security automation templates built on its Automations platform, deploying AI agents that reviewed thousands of PRs and blocked hundreds of vulnerabilities from reaching production over two months.

NVIDIA launched NemoClaw, an open-source stack that adds policy-based privacy and security guardrails to OpenClaw, enabling anyone to deploy always-on autonomous agents locally with a single command.

Perplexity's Computer can now take full control of Comet, spinning up a browser agent that accesses any site or logged-in app without connectors or MCPs, available to all users.

Mistral AI joined NVIDIA's Nemotron Coalition as a founding member to co-develop open-source frontier models, combining Mistral's architectures with NVIDIA's compute and synthetic data pipelines.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.