Hello from The AI Night,

Today in AI:

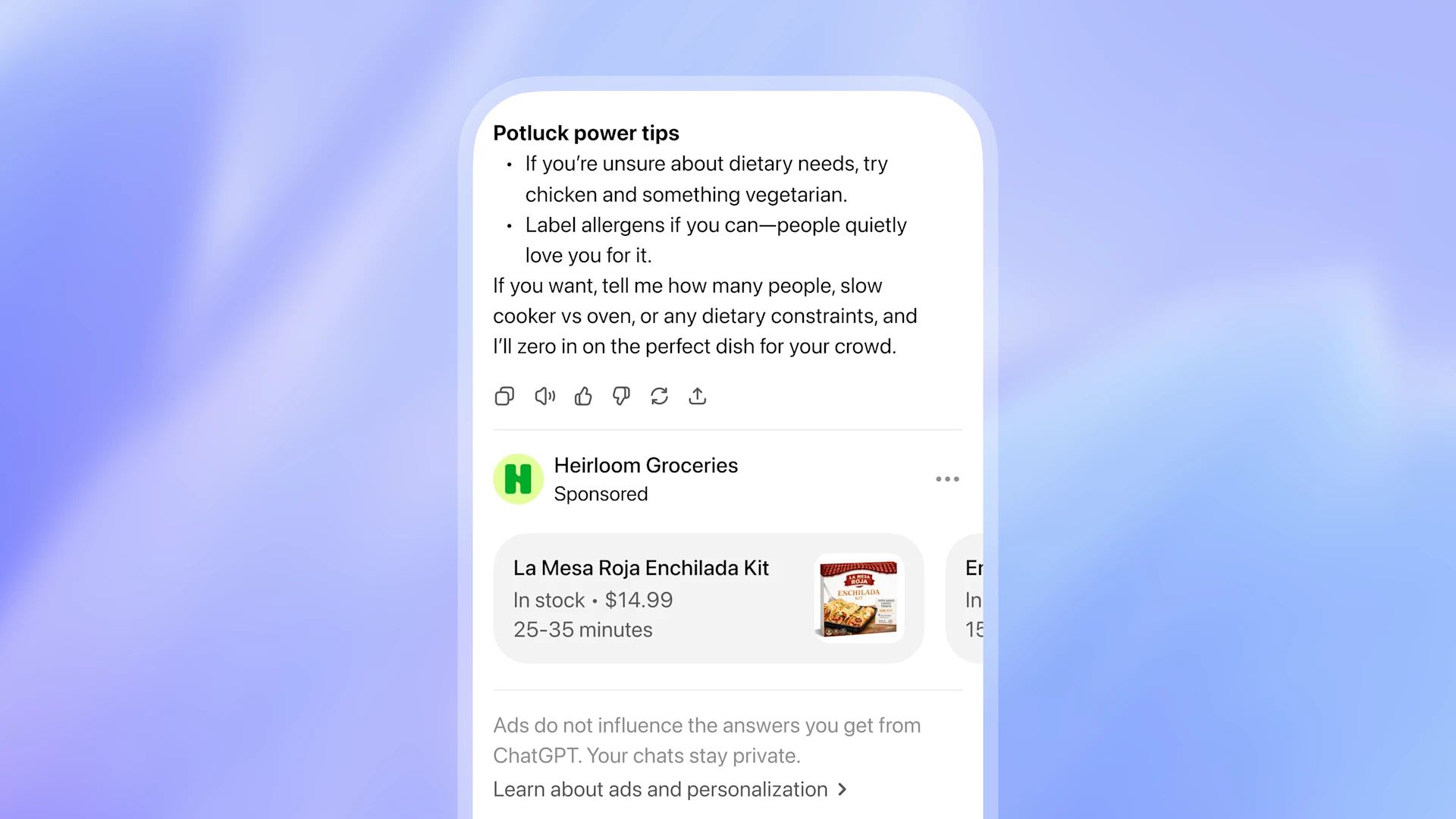

OpenAI Rolling Out Ads Inside ChatGPT

Qwen Launches Image-2.0, a Unified Generation and Editing Model

Cursor Introduces Composer 1.5, Proving RL for Coding Scales Predictably with 20x More Compute

Image Source: OpenAI blog

Here's the deal: OpenAI is rolling out its first ad test in ChatGPT for logged-in adult users in the U.S. on the Free and Go tiers. Plus, Pro, Business, Enterprise and Education plans will remain ad-free.

The Breakdown:

Ads are matched to users based on conversation topic, past chats and prior ad interactions. This data is not shared directly with advertisers.

Advertisers receive only aggregate data (views, clicks). They never see individual chats, memories or personal details.

Ads are visually labeled as "sponsored" and separated from organic answers. OpenAI states they do not influence ChatGPT's responses.

Users can dismiss ads, delete ad data, and toggle personalization off. Free-tier users can also opt out of ads in exchange for fewer daily messages.

No ads will appear for users under 18 or near sensitive topics like health, mental health or politics.

OpenAI plans to expand formats, objectives and buying models over time.

The bigger picture: This is OpenAI's first real step toward an ad-supported business model, signaling a shift in how AI assistants get monetized. For builders and marketers, it opens a new high-intent ad surface where users are actively exploring decisions.

Image Source: Qwen blog

Here's the deal: Qwen released Qwen-Image-2.0, a 7B-parameter image generation model that merges its previously separate generation and editing tracks into a single architecture. The model supports 1k-token instructions and native 2K resolution output.

The Breakdown:

Handles complex, text-heavy outputs like PPT slides, A/B testing report infographics, calendars with lunar notations and multi-panel comics with dialogue in speech bubbles.

Supports multiple calligraphic styles including Running Script, Slender Gold Script and Small Regular Script with high character accuracy.

Achieves top performance on both text-to-image and image-to-image benchmarks in blind testing on AI Arena, using one model for both tasks.

Editing capabilities include adding styled calligraphy to photos, merging separate images of the same person with consistent lighting and placing 2D characters into real photos with correct perspective.

Smaller model size and faster inference compared to predecessors.

The bigger picture: A single 7B model that handles both generation and editing at this fidelity level lowers the barrier for teams building design automation, localized marketing assets, and structured visual content at scale.

Cursor

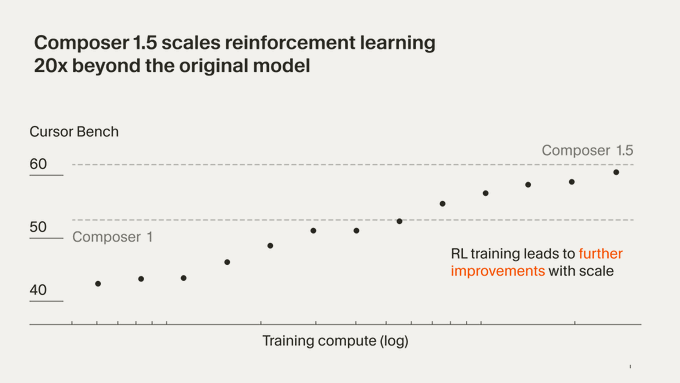

Cursor Introduces Composer 1.5, Proving RL for Coding Scales Predictably with 20x More Compute

Image Source: Cursor blog

Here's the deal: Cursor released Composer 1.5, an upgraded agentic coding model trained by scaling reinforcement learning 20x beyond Composer 1 on the same pretrained base. The post-training compute for 1.5 actually exceeds the compute used to pretrain the base model itself.

The Breakdown:

Composer 1.5 is a thinking model that generates reasoning tokens before acting, planning steps across the user's codebase.

It adapts effort dynamically: minimal thinking on easy problems, extended reasoning on hard ones, keeping daily use fast.

A trained self-summarization capability lets the model compress its context mid-task and continue working, even triggering recursively on difficult problems.

Internal benchmarks show steady performance gains over Composer 1 as RL scales, with the largest improvements on challenging tasks.

Self-summarization preserves accuracy as context length varies.

The bigger picture: Cursor is demonstrating that RL for coding scales predictably, not just for frontier lab models but for product-specific ones. Adaptive thinking depth and self-summarization point toward agentic coding tools that handle longer, harder tasks without sacrificing speed on routine work.

World’s First Safe AI-Native Browser

AI should work for you, not the other way around. Yet most AI tools still make you do the work first—explaining context, rewriting prompts, and starting over again and again.

Norton Neo is different. It is the world’s first safe AI-native browser, built to understand what you’re doing as you browse, search, and work—so you don’t lose value to endless prompting. You can prompt Neo when you want, but you don’t have to over-explain—Neo already has the context.

Why Neo is different

Context-aware AI that reduces prompting

Privacy and security built into the browser

Configurable memory — you control what’s remembered

As AI gets more powerful, Neo is built to make it useful, trustworthy, and friction-light.

What else you need to know:

OpenAI began rolling out GPT-5.2 as the model behind ChatGPT's deep research feature, with additional improvements shipping starting today.

Runway raised $315 million in Series E funding led by General Atlantic, with participation from NVIDIA and Adobe Ventures, to pre-train its next-generation world models.

ElevenLabs introduced Expressive Mode for its ElevenAgents platform, combining Eleven v3 Conversational TTS with a new turn-taking system to deliver more emotionally aware voice agents in 70+ languages.

NVIDIA and Docusign are collaborating on Nemotron Parse integration to extract tables, text and metadata from complex contracts, turning agreement repositories into structured data for AI workflows.

NVIDIA and Samsung announced plans to build an AI factory with 50,000 GPUs for semiconductor manufacturing, achieving 20x gains in computational lithography through NVIDIA cuLitho integration.

That’s it for today’s edition of The AI Night.

Our goal is to cut through the noise, surface what actually changed, and explain why it matters.

If this was useful, you’ll get the same signal here tomorrow.